How to Convert JSON to CSV (and Vice-Versa)

I remember the first time I needed to convert JSON to CSV. I was working on a data migration project, and the client had given me a massive JSON file with user data. They needed it in Excel. "No problem," I thought. "I'll just... wait, how do you convert JSON to CSV again?"

I spent the next hour googling, trying different Python scripts, and eventually cobbling together something that sort of worked. It was messy, and I knew there had to be a better way.

Since then, I've converted JSON to CSV (and back) more times than I can count. Sometimes it's for data analysis in Excel. Sometimes it's for importing into a database. Sometimes it's just because someone on the team doesn't speak JSON and needs a spreadsheet.

Here's everything I've learned about converting between these two formats, the gotchas to watch out for, and the tools that make it painless.

Why Convert Between JSON and CSV?

Before we dive into the how, let's talk about the why. These formats serve different purposes:

JSON is great for:

- APIs and web applications

- Nested, hierarchical data

- When you need to preserve data types (numbers, booleans, null)

- Complex data structures with arrays and objects

CSV is great for:

- Spreadsheet applications (Excel, Google Sheets)

- Simple, flat data tables

- Data analysis and reporting

- When non-technical users need to work with the data

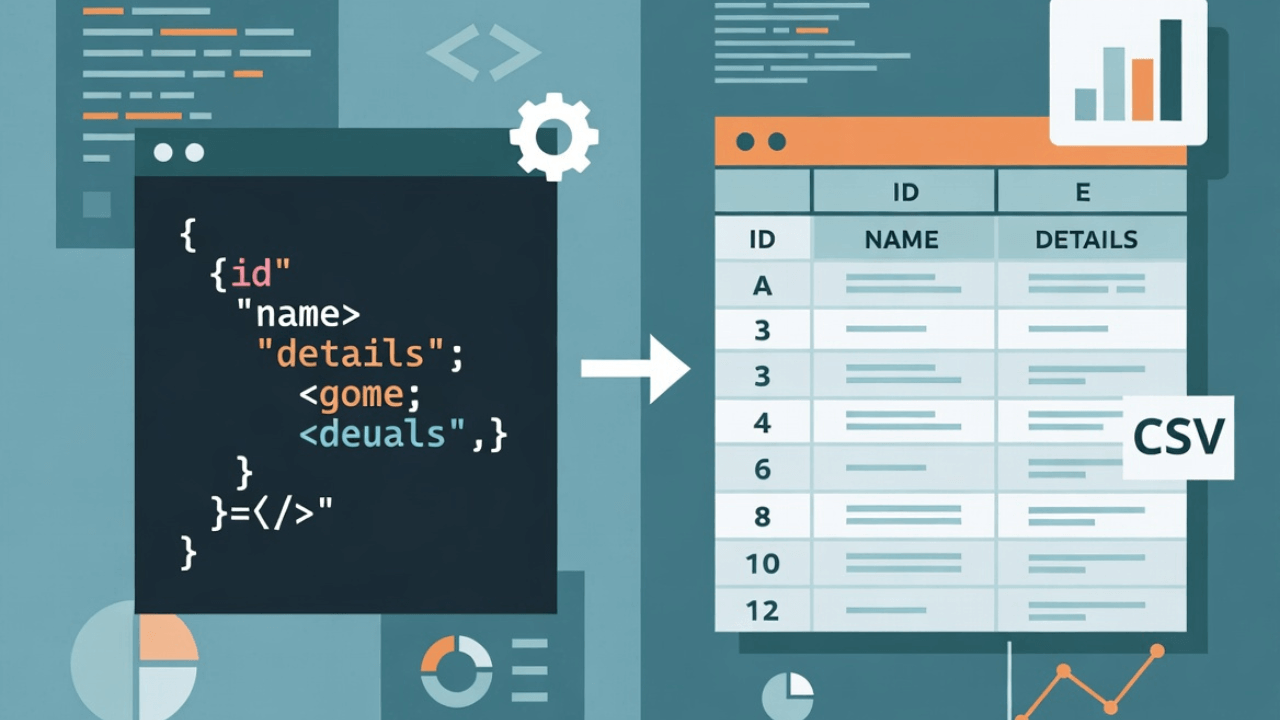

The problem is, they're not always compatible. JSON can have nested objects and arrays. CSV is flat—it's rows and columns, period. Converting between them requires some decisions about how to handle that complexity.

Converting JSON to CSV: The Basics

Let's start with the simpler direction: JSON to CSV. Here's a straightforward example:

Input JSON:

[

{

"name": "John Doe",

"age": 30,

"email": "john@example.com",

"city": "New York"

},

{

"name": "Jane Smith",

"age": 25,

"email": "jane@example.com",

"city": "San Francisco"

}

]

Output CSV:

name,age,email,city

John Doe,30,john@example.com,New York

Jane Smith,25,jane@example.com,San Francisco

Simple, right? Each JSON object becomes a row. Each key becomes a column header. But what happens when your JSON gets more complex?

The Nested Data Problem

Here's where it gets tricky. What if your JSON looks like this?

[

{

"name": "John Doe",

"age": 30,

"address": {

"street": "123 Main St",

"city": "New York",

"zip": "10001"

},

"hobbies": ["reading", "coding", "traveling"]

}

]

You can't just flatten this into CSV directly. You have a few options:

- Flatten the nested object:

address.street,address.city,address.zip - Stringify the nested data: Convert the entire address object to a JSON string

- Create multiple rows: One row per hobby (denormalize)

- Use a delimiter: Join the hobbies array with semicolons or pipes

There's no "right" answer—it depends on what you need. For Excel users, flattening usually works best. For databases, you might want to denormalize.

Method 1: Online Tools (The Quick Way)

For one-off conversions, online tools are unbeatable. No code, no setup, just paste and convert.

I use our CSV to JSON Converter for this all the time. It handles both directions, supports custom delimiters, and runs entirely in your browser (so your data never leaves your computer).

Here's how it works:

- Paste your JSON (or upload a file)

- Choose your options (how to handle nested data, delimiter preferences)

- Click convert

- Download or copy the CSV

Best for: Quick conversions, one-time tasks, when you don't want to write code.

Method 2: Python (The Programmer's Way)

If you're doing this programmatically, Python is the way to go. The pandas library makes it trivial:

import pandas as pd

import json

# Read JSON file

with open('data.json', 'r') as f:

data = json.load(f)

# Convert to DataFrame

df = pd.DataFrame(data)

# Handle nested objects by flattening

# If you have nested data, you might need:

# df = pd.json_normalize(data)

# Save to CSV

df.to_csv('output.csv', index=False)

For nested data, pandas.json_normalize() is your friend:

import pandas as pd

import json

with open('nested_data.json', 'r') as f:

data = json.load(f)

# This automatically flattens nested objects

df = pd.json_normalize(data)

# For arrays, you might need to explode them

# df = df.explode('hobbies')

df.to_csv('output.csv', index=False)

Best for: Batch processing, automation, when you need to transform the data during conversion.

Method 3: JavaScript/Node.js

If you're working in a JavaScript environment, here's a simple converter:

function jsonToCsv(jsonData) {

if (!Array.isArray(jsonData) || jsonData.length === 0) {

return '';

}

// Get headers from first object

const headers = Object.keys(jsonData[0]);

// Create CSV header row

const csvRows = [headers.join(',')];

// Convert each object to a CSV row

for (const row of jsonData) {

const values = headers.map(header => {

const value = row[header];

// Handle nested objects and arrays

if (typeof value === 'object' && value !== null) {

return JSON.stringify(value);

}

// Escape commas and quotes

if (typeof value === 'string' && (value.includes(',') || value.includes('"'))) {

return `"${value.replace(/"/g, '""')}"`;

}

return value;

});

csvRows.push(values.join(','));

}

return csvRows.join('\n');

}

// Usage

const jsonData = [

{ name: "John", age: 30, city: "New York" },

{ name: "Jane", age: 25, city: "San Francisco" }

];

const csv = jsonToCsv(jsonData);

console.log(csv);

For more complex scenarios, check out libraries like json2csv:

const { parse } = require('json2csv');

const fields = ['name', 'age', 'email'];

const opts = { fields };

const csv = parse(jsonData, opts);

Best for: Web applications, Node.js scripts, when you're already working in JavaScript.

Converting CSV to JSON: The Reverse Journey

Now let's flip it. CSV to JSON is usually simpler because CSV is flat, but you still need to make decisions.

Input CSV:

name,age,email,city

John Doe,30,john@example.com,New York

Jane Smith,25,jane@example.com,San Francisco

Output JSON:

[

{

"name": "John Doe",

"age": "30",

"email": "john@example.com",

"city": "New York"

},

{

"name": "Jane Smith",

"age": "25",

"email": "jane@example.com",

"city": "San Francisco"

}

]

Notice something? The age values are strings, not numbers. CSV doesn't preserve data types—everything is text. You'll need to manually convert types if that matters.

CSV to JSON: Python Approach

Again, pandas makes this easy:

import pandas as pd

# Read CSV

df = pd.read_csv('data.csv')

# Convert to JSON

json_data = df.to_json(orient='records', indent=2)

# Save to file

with open('output.json', 'w') as f:

f.write(json_data)

The orient='records' parameter gives you an array of objects, which is usually what you want.

If you need to convert data types:

import pandas as pd

df = pd.read_csv('data.csv')

# Convert specific columns to numeric

df['age'] = pd.to_numeric(df['age'], errors='coerce')

# Convert to JSON

json_data = df.to_json(orient='records', indent=2)

CSV to JSON: JavaScript Approach

Here's a simple JavaScript converter:

function csvToJson(csvText) {

const lines = csvText.split('\n');

const headers = lines[0].split(',');

const result = [];

for (let i = 1; i < lines.length; i++) {

if (!lines[i].trim()) continue; // Skip empty lines

const values = lines[i].split(',');

const obj = {};

headers.forEach((header, index) => {

obj[header.trim()] = values[index]?.trim() || '';

});

result.push(obj);

}

return JSON.stringify(result, null, 2);

}

Warning: This simple version doesn't handle commas inside quoted fields. For production use, use a proper CSV parser like papaparse:

const Papa = require('papaparse');

const csv = `name,age,email

John Doe,30,john@example.com`;

const result = Papa.parse(csv, {

header: true,

skipEmptyLines: true

});

const json = JSON.stringify(result.data, null, 2);

Common Gotchas and How to Avoid Them

I've made all these mistakes. Learn from my pain:

1. Commas in Data: CSV uses commas as delimiters, so if your data contains commas, you need to quote the field. Always use a proper CSV parser—don't try to split on commas manually.

2. Data Type Loss: CSV is text-only. Numbers become strings. Booleans become "true"/"false" strings. Dates become text. You'll need to manually convert types after importing.

3. Nested Data: JSON can have nested objects and arrays. CSV can't. You need to decide: flatten it, stringify it, or denormalize it. There's no automatic solution.

4. Encoding Issues: CSV files can have encoding problems, especially with special characters. Always specify UTF-8 encoding.

5. Empty Values: CSV represents empty values as empty strings. JSON can have null. Decide how you want to handle this conversion.

6. Headers: CSV usually has headers. Make sure your converter handles the first row correctly—as headers, not data.

Real-World Use Cases

Here are some scenarios where I've needed these conversions:

Data Migration: Moving data from a JSON API into a database that expects CSV imports. The flattening was the tricky part.

Reporting: Converting JSON API responses to CSV so the marketing team could analyze data in Excel. They needed it weekly, so I automated it.

Data Cleaning: Sometimes it's easier to clean data in Excel (find/replace, formulas) than in code. Convert to CSV, clean it, convert back to JSON.

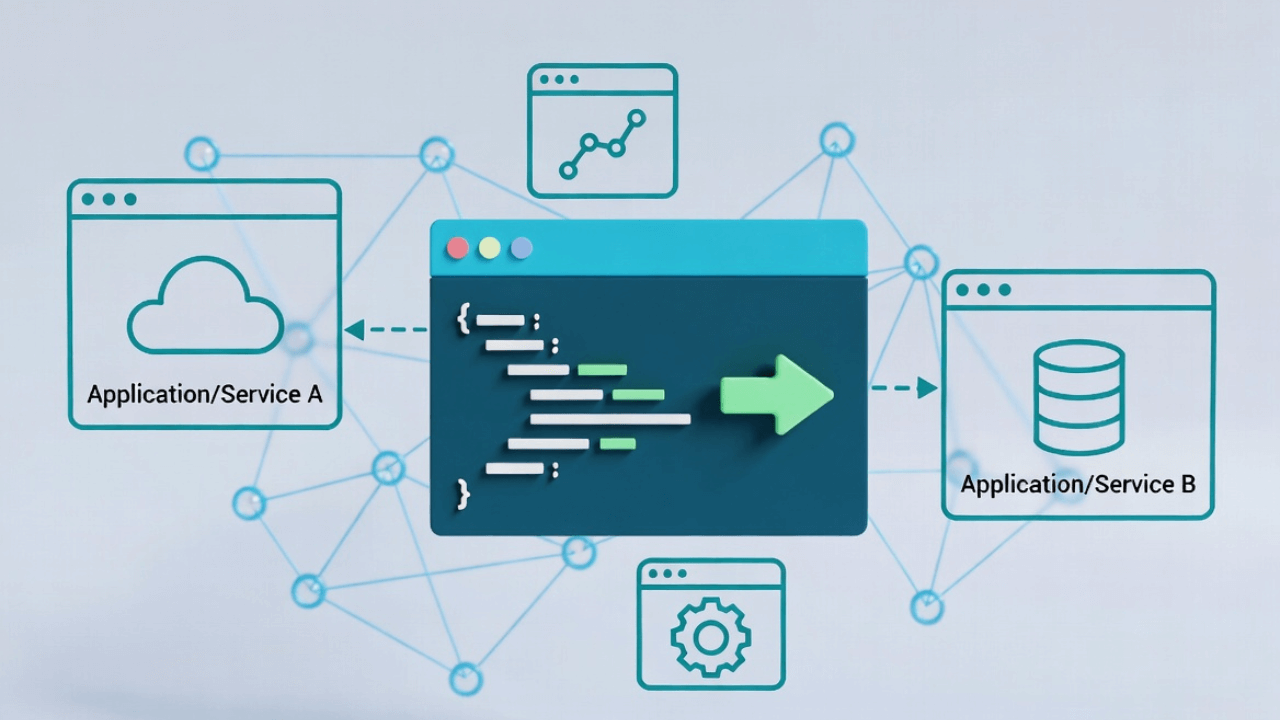

Integration: A client's system exported CSV, but our API expected JSON. We built a converter in the middle.

Backup: Converting JSON databases to CSV as a human-readable backup format. Not ideal, but sometimes you need something that will open in any text editor.

Best Practices

After doing this hundreds of times, here's what I've learned:

-

Always validate your data: Check that the conversion preserved all rows and columns. It's easy to lose data during conversion.

-

Handle edge cases: Empty values, special characters, nested data—test these scenarios.

-

Preserve metadata: If you're converting back and forth, you'll lose information. Consider keeping a mapping file or documentation.

-

Use proper parsers: Don't try to parse CSV with string splits. Use a library. It's not worth the bugs.

-

Test with real data: Your test data is probably clean. Real data is messy. Test with actual production data (anonymized, of course).

-

Consider the destination: If you're converting for Excel, think about what Excel can handle. Long text fields? Dates in a specific format? Plan ahead.

Frequently Asked Questions

How do I handle nested JSON when converting to CSV?

Flatten nested objects by creating columns like address.city or convert nested structures to JSON strings within CSV cells. For simple analysis, flattening works best. For complex data, consider keeping it as JSON within CSV or using multiple related CSV files.

Can I convert CSV back to JSON without losing data?

Simple flat CSV converts back perfectly. However, you lose data type information (everything becomes strings), nested structures (unless preserved as JSON strings), and null vs. empty string distinction. Document your conversion strategy for round-trips.

What's the best tool for batch JSON to CSV conversion?

For batch processing, use Python with pandas (df.to_csv() and pd.read_json()) or Node.js scripts with json2csv. These handle thousands of files efficiently and allow custom transformation logic.

How do I handle special characters in CSV?

Use proper CSV libraries that handle escaping automatically. Commas, quotes, and newlines within data must be escaped. Don't try to manually escape—use tested libraries like Python's csv module or JavaScript's papaparse.

Should I use JSON or CSV for data storage?

JSON is better for hierarchical data, APIs, and configuration. CSV is better for tabular data, spreadsheet analysis, and simple datasets. Use JSON for flexibility, CSV for simplicity. Learn more in our JSON basics guide.

Real-World Use Cases

JSON-CSV conversion solves practical problems across industries:

Data Analysis: Import API data (JSON) into Excel/Tableau (CSV) for business intelligence dashboards and reporting.

Database Imports: Many databases import CSV easily. Convert JSON exports from NoSQL databases to CSV for SQL database imports.

Legacy System Integration: Old systems often require CSV. Modern APIs return JSON. Conversion bridges the gap between legacy and modern systems.

Data Backup: JSON is great for structured backups, but CSV is human-readable without special tools—useful for disaster recovery scenarios.

For related tasks, see working with nested JSON, JSON payloads, and opening JSON files.

External Resources

- RFC 4180: CSV Format - Official CSV specification

- Pandas Documentation - Python data manipulation

- PapaParse - JavaScript CSV parser

- MDN: JSON - Working with JSON

The Bottom Line

Converting between JSON and CSV isn't rocket science, but it's not trivial either. The format mismatch (nested vs. flat) means you'll always need to make decisions about how to handle complex data.

For quick, one-off conversions, use an online tool like our CSV to JSON Converter. It's fast, handles edge cases, and keeps your data private.

For automation or batch processing, use Python with pandas or JavaScript with a proper CSV parser. Don't try to build your own parser—you'll regret it when you hit edge cases.

The key is understanding the limitations of each format and making informed decisions about how to bridge the gap. Once you've done it a few times, it becomes second nature.

And remember: there's no shame in using a tool. I've written converters from scratch, and I still reach for online tools when I just need to convert something quickly. The best tool is the one that gets the job done with the least friction.

Want to try it yourself? Head over to our CSV to JSON Converter and paste in some data. You'll see how it handles nested objects, arrays, and all those edge cases that make manual conversion a pain. For more JSON tools, check out our JSON Formatter and guides on JSON formatting, JSON validation, and parsing JSON in JavaScript.

About Kavishka Gimhan

Passionate writer and content creator sharing valuable insights and practical advice. Follow for more quality content and updates.